Authors / metadata

DOI: 10.36205/trocar7.2026004

Abstract

Artificial intelligence (AI) is rapidly transforming gynecologic robotic surgery by enhancing precision, visualization, and intraoperative decision-making. While robotic surgery improves dexterity and minimizes tremor, it lacks tactile feedback and standardized performance assessment. Integrating AI helps overcome these limitations, potentially improving both surgical outcomes and training. This narrative literature review analyses studies from 2020 to 2025 on clinical applications of AI in gynecologic robotic surgery, identified through searches of databases including PubMed, Scopus, and IEEE Xplore. Applications discussed include AI-assisted autonomy (e.g., automated suturing and camera control), augmented reality for real-time imaging, and machine learning-based workflow and gesture recognition. AI-powered tactile feedback systems are beginning to address the absence of haptic sensation, improving tissue differentiation. AI also shows promise in postoperative recovery by enabling personalized rehabilitation and early complication detection. These advances support safer, more efficient procedures and provide objective metrics for surgical training. However, challenges remain, including large data requirements, ethics, and clinician accountability. Despite these challenges, AI integration promises substantial improvements in surgical precision, outcomes, and education in gynecologic robotic surgery.

Introduction

AI has witnessed tremendous growth in the healthcare sector, owing to the development of machine learning algorithms and the availability of extensive medical databases. These technologies have changed how we understand, diagnose, and treat diseases. AI is capable of analysing vast and complex datasets using advanced computational architectures (1). AI integration into robotic surgery is poised to transform gynecologic surgical practice. Robotic surgery, which employs computer-controlled arms, enhances surgical dexterity and visualization and minimizes hand tremor compared with traditional laparoscopy. These systems are widely adopted across specialties including gynecology, oncology, orthopaedics, and general surgery. AI has further enriched the operating experience by automating procedural tasks, improving visualization of the surgical field, and providing real-time surgical assessment and feedback. The advantages of incorporating AI in robotic surgery include enhanced surgical accuracy, reduced surgeon fatigue, and increased patient safety. However, barriers to widespread adoption persist, including cost-effectiveness, accessibility, the learning curve and training requirements, and ethical concerns surrounding AI-based decision-making in surgery. This paper is a detailed survey of AI-driven robotic systems, highlighting their advantages, their limitations, and the future of AI in robotic surgery (2).

Material and Methods

This narrative review was conducted by searching electronic databases including PubMed, Google Scholar, EMBASE, Web of Science, Scopus, and IEEE Xplore for publications from 2020 to 2025. Search terms incorporated Boolean operators and keywords such as: “Robotic Surgery” AND “Gynecology”, “Artificial Intelligence” AND “Robotic Surgery”, “Deep Learning”, “Machine Learning”, “Autonomous Camera Positioning”, and “Augmented Reality” AND “Gynecological Surgery”. Inclusion criteria were original research articles, reviews, and relevant conference papers published in English addressing AI applications in gynecologic robotic surgery. Studies were excluded if they lacked clinical applicability or focused solely on technical engineering aspects without surgical context. Representative studies were selected, and 31 articles underwent full-text assessment. Although a formal systematic review protocol was not followed, efforts were made to ensure comprehensive coverage of recent advances.

Background

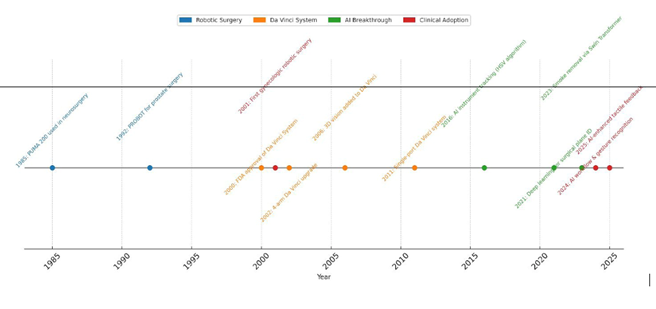

Robots have been utilized in surgical procedures for over 35 years, with rapid advancements occurring particularly in the last two decades. The first medical robot, the Programmable Universal Machine for Assembly (PUMA 200), was employed in 1985 for neurosurgical biopsies. Initially developed to enable remote operations, robotic platforms allowed surgeons to treat patients from distant locations, such as military battlefields. Over time, these robotic systems became increasingly refined, offering enhanced precision, control, and accuracy. A major breakthrough in robotic surgery occurred with the FDA approval of the da Vinci Surgical System in 2000. Originally designed with three robotic arms and later upgraded to four in 2002, this system integrated the Automated Endoscopic System for Optimal Positioning (AESOP) for improved camera control alongside surgical arms capable of seven degrees of movement. The addition of a 3D camera in 2006 and single-port access tools in 2011 further addressed many of the limitations inherent in laparoscopic surgery, enhancing surgical dexterity and visualization (3). Today, robotic-assisted surgery is widely used in gynecology for both benign and malignant conditions, including hysterectomy, myomectomy, and pelvic exenteration.

Discussion

Integration of AI in robotic surgery

The integration of artificial intelligence (AI) and machine learning (ML) has further propelled advancements in robotic surgery by enabling automation, real-time analytics, and enhanced intraoperative decision-making. First conceptualized in 1956, AI now broadly encompasses intelligent technologies capable of simulating human cognition, while ML enables these systems to learn and adapt from large datasets. AI systems for robotic surgery are developed through the collection, preparation, and annotation of high-quality, multimodal data from real surgical procedures. This process involves capturing detailed video data, automating the extraction of relevant events, recording surgeon movements in 3D, and annotating video to create the datasets essential for developing reliable AI models for robotic surgery (4). Levin et al., in their study “Introducing surgical intelligence in gynecology: automated identification of key steps in hysterectomy”, demonstrated the practical feasibility and high accuracy of incorporating AI-driven step identification into robotic gynecological surgery (5).

Intraoperative AI based assistance in Robotic Gynaecologic surgery

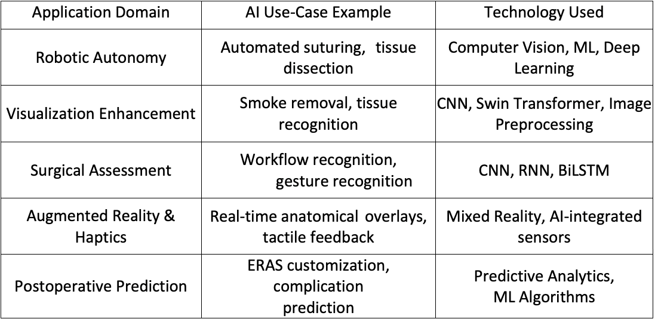

There is significant potential to enhance the accuracy, effectiveness, and accessibility of robotic surgery through the incorporation of AI (Table 1).

Broadly, AI-enabled intraoperative improvements fall into three groups: robotic autonomy, surgical field enhancement, and surgical assessment/feedback.

Robotic autonomy

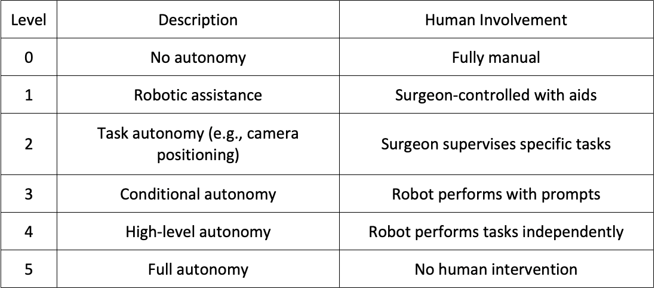

The ultimate goal for robots is to become increasingly autonomous. The International Organization for Standardization (ISO 8373:2012) defines autonomy as: “an ability to perform intended tasks based on current state and sensing without human intervention”. Thanks to substantial advances in machine learning (ML), deep learning (DL), computer vision (CV), and natural language processing (NLP) over recent decades, robotic autonomy is increasingly becoming a practical reality. By automating repetitive tasks, AI reduces cognitive load, allowing surgeons to maintain focus during critical phases of surgery. AI algorithms analyse real-time data during surgery to anticipate tissue activity and dynamically adjust robotic movements accordingly (2). A global consensus defines six levels of surgical autonomy, ranging from fully manual (Level 0) to complete autonomy (Level 5).

In gynecologic surgery, research focuses on automating subtasks such as camera control and tissue dissection, particularly in robotic hysterectomy.

Tissue dissection: in robotic hysterectomy, the uterus must be dissected at three levels of connective vascular pedicles. Autonomous tissue dissection enables more precise excision with a reduced risk of inadvertent injury to vital structures. Suturing: suturing is a repetitive but essential component of procedures such as myomectomy and hysterectomy, often time-consuming and physically demanding. Its repetitive nature and clearly defined procedural constraints make it an ideal candidate for automation.

Autonomous camera positioning: with tele-robotic surgical systems, surgeons must periodically halt the procedure to manually adjust camera positioning. At times, one or both instruments may move outside the camera’s field of view, potentially leading to delays and errors. Amini Khoiy et al. developed and validated a marker-free, Hue Saturation Value (HSV)-based segmentation algorithm for real-time tracking of the surgical instrument tip, enabling autonomous control of a camera-holding robot in laparoscopic surgery with high accuracy and low latency and demonstrating promising results for future practical applications (6). In robotic hysterectomy, precision is crucial due to the proximity of vital structures. AI-driven systems now support real-time recognition of surgical phases, automated instrument tracking, and increasingly autonomous task execution. Progress in real-time decision-making and error management suggests that fully autonomous procedures may eventually become possible, offering greater precision and safety (7).
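The idea behind HSV-based instrument tracking can be illustrated with a minimal sketch. This is not the algorithm of the cited study: it assumes a tiny RGB frame stored as nested lists, a hypothetical HSV window for the instrument colour, and it uses the centroid of matching pixels as a stand-in for the tip position. The resulting offset from the image centre is the kind of error signal a camera-holding robot could try to drive toward zero.

```python
import colorsys

# Hypothetical HSV window for the instrument colour (an assumption for
# illustration, not a value from the cited study). colorsys uses a 0..1 scale.
H_RANGE = (0.25, 0.45)   # greenish hues
S_MIN = 0.5              # minimum saturation
V_MIN = 0.3              # minimum brightness


def segment_instrument(frame):
    """Return (row, col) positions of pixels inside the HSV window."""
    hits = []
    for r, row in enumerate(frame):
        for c, (red, green, blue) in enumerate(row):
            h, s, v = colorsys.rgb_to_hsv(red / 255, green / 255, blue / 255)
            if H_RANGE[0] <= h <= H_RANGE[1] and s >= S_MIN and v >= V_MIN:
                hits.append((r, c))
    return hits


def centroid(pixels):
    """Mean (row, col) of the segmented pixels, or None if nothing matched."""
    if not pixels:
        return None
    n = len(pixels)
    return (sum(r for r, _ in pixels) / n, sum(c for _, c in pixels) / n)


def camera_offset(frame):
    """Offset (d_row, d_col) from the image centre to the instrument centroid."""
    pos = centroid(segment_instrument(frame))
    if pos is None:
        return None  # instrument not visible in this frame
    centre = ((len(frame) - 1) / 2, (len(frame[0]) - 1) / 2)
    return (pos[0] - centre[0], pos[1] - centre[1])


# Toy 5x5 frame: reddish "tissue" background with two green "instrument" pixels.
TISSUE, TOOL = (180, 60, 60), (0, 200, 0)
frame = [[TISSUE] * 5 for _ in range(5)]
frame[1][3] = TOOL
frame[1][4] = TOOL
offset = camera_offset(frame)  # instrument lies above and to the right of centre
```

A practical system would add noise filtering, handle specular highlights and smoke, and smooth the offset over time before commanding the camera; none of that is attempted here.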

Surgical field enhancement

Accessing deep anatomical spaces in gynecologic surgery presents significant risk. AI-enhanced robotic systems, combined with real-time image processing, improve identification of critical structures and instruments. Tools such as AI-driven mixed reality platforms allow preoperative mapping (e.g., using MRI data for uterine fibroids), personalized surgical planning, and intraoperative 3D visualization, thereby enhancing safety and outcomes. Specifically, AI-based online pre-processing systems have been developed to deblur and colour-correct live camera feeds, thus enhancing surgical visualization (2). Gynecological procedures such as hysterectomy and myomectomy often involve electrosurgical devices that generate substantial amounts of smoke, which can obscure the surgical field. Consequently, surgeons may need to periodically pause the procedure to clear the smoke, disrupting workflow and prolonging operative time. To address this issue, Wang et al. proposed a method combining Convolutional Neural Networks (CNN) and Shifted Windows (Swin) transformers to remove smoke from the surgical view, thereby achieving clear, smoke-free intraoperative visualization (8). Beyond improving the operative view, AI also aids surgeons in recognizing and differentiating native tissue structures. The success of any gynecologic surgery relies heavily on accurately identifying avascular surgical planes such as the vesicouterine, pararectal, paravaginal, presacral, and retropubic spaces. Precise delineation of these planes is critical to prevent inadvertent injury to vital arteries, veins, and nerves. Kumazu et al. developed and validated a deep learning-based AI model that automatically segments loose connective tissue fibers to define safe dissection planes during robot-assisted gastrectomy, thereby minimizing the risk of inadvertent injury. As in gastrectomy, this technology could serve as a visual co-pilot for the surgeon, supporting intraoperative decisions in minimally invasive gynecologic procedures (9). In gynecologic oncologic surgery, AI-driven technologies such as Full-Field Optical Coherence Tomography (FF-OCT) and Dynamic Cell Imaging (DCI) have been developed to provide high-resolution tumor margin assessments in under five minutes. These advances increase surgical accuracy and reduce unnecessary tissue removal. A significant challenge in gynecologic oncology is cancer recurrence, which can be reduced through the enhanced precision that ensures clear surgical margins during tumor excision (10).

Augmented reality and tactile feedback

Alongside advances in autonomy, AI also strengthens the surgeon’s ability to visualize and navigate complex anatomy. Augmented Reality (AR) is increasingly applied in robotic gynecologic surgeries such as myomectomy, polypectomy, and adenomyomectomy to enhance precision in localizing intrauterine structures, a task traditionally reliant on tactile palpation. Since imaging modalities like MRI and ultrasonography often cannot fully distinguish pathological tissues from normal ones, AR integrated with robotic systems addresses this challenge in endoscopic surgery, reducing the risk of incomplete removal of fibroids or polyps (11). For instance, Liu et al. developed a mixed reality system combining AI-driven automatic segmentation and 3D reconstruction of pelvic MRI data to accurately map uterine fibroids and their vascular anatomy. This supports personalized preoperative planning, tactile-feedback-based surgical simulation, and real-time intraoperative 3D visualization (12). Similarly, Hofman et al. demonstrated a pioneering AI-assisted augmented reality system that manages surgical instrument occlusion with deep learning models, enabling more seamless integration of AR during robotic surgery (13). To address the critical deficit of tactile sensation in minimally invasive procedures, AI-enhanced tactile intelligence systems have been introduced. Doria et al. explored haptic interfaces equipped with AI-integrated piezoelectric sensors in teleoperated robotic myomectomy; these facilitate localization of submucosal or intramural lesions and differentiate tissue properties through enhanced haptic feedback (14). This fusion of AR, AI, and advanced haptics enables safer, more accurate robotic gynecologic surgery by compensating for the lack of direct palpation and improving intraoperative tissue discrimination.

Surgical assessment and feedback

Traditionally, surgical performance was evaluated using clinical outcomes such as histopathology, morbidity, and mortality. Recent advances, however, have established intraoperative performance analysis by AI as an objective tool for evaluating skills and guiding targeted interventions.

Workflow Recognition

AI driven models are increasingly used for automated analysis of surgical workflows using minimally invasive surgery video recordings. Technologies like Convolutional Neural Networks (CNN) and Recurrent Neural Networks (RNN) enable accurate, step-by-step recognition of procedural stages, capturing both sequence and duration of each phase. This spatiotemporal analysis helps detect deviations and identify prolonged or challenging steps that may indicate complications, prompting timely alerts or guidance for the team. For standardized procedures such as hysterectomy, workflow evaluation incorporates data on camera movements and energy usage, collected via platforms like the Intuitive Data Recorder. Recent developments, such as Objective Performance Indicators (OPIs), enable structured, quantitative assessment of each surgical step, supporting the creation of training curricula and monitoring trainee progress while highlighting situations requiring additional attention or skill development (15–17).
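To make the sequence-and-duration analysis concrete, the sketch below assumes that a recognition model (not shown) has already produced one phase label per video frame. It collapses those labels into timed segments and flags phases that run well beyond a reference duration. The phase names, reference durations, and the 1.5x tolerance are all hypothetical placeholders, not values from the cited work.

```python
from itertools import groupby


def phase_segments(frame_labels, fps):
    """Collapse per-frame labels into (phase, start_s, duration_s) segments."""
    segments = []
    start = 0  # index of the first frame of the current run
    for phase, run in groupby(frame_labels):
        n = len(list(run))
        segments.append((phase, start / fps, n / fps))
        start += n
    return segments


def prolonged_phases(segments, reference_s, tolerance=1.5):
    """Return phases whose total time exceeds tolerance x the reference time."""
    totals = {}
    for phase, _, duration in segments:
        totals[phase] = totals.get(phase, 0.0) + duration
    return sorted(
        phase
        for phase, total in totals.items()
        if phase in reference_s and total > tolerance * reference_s[phase]
    )


# Toy example: 12 frames at 2 frames per second (hypothetical phase names).
labels = ["dissection"] * 4 + ["colpotomy"] * 6 + ["closure"] * 2
segments = phase_segments(labels, fps=2)
# Hypothetical reference durations in seconds for each phase.
reference = {"dissection": 2.0, "colpotomy": 1.0, "closure": 1.0}
flagged = prolonged_phases(segments, reference)
```

Summing durations per phase, rather than per segment, means a phase that is revisited several times is judged on its total time; whether that is the right choice depends on how the procedure is annotated.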

Gesture Recognition

Automatic recognition of fine-grained surgical gestures has become essential for autonomous robotic surgery systems and training platforms. AI models, notably Bidirectional Long Short-Term Memory (BiLSTM) networks, analyse gestures in tasks like suturing, demonstrating increased accuracy and efficiency over conventional assessment methods. This technology enhances both real-time feedback and interactive training environments, supporting surgeon skill evaluation and decision-making. The integration of gesture recognition into surgical education and robotic assistance promises to further improve procedural safety, precision, and learning outcomes (18).

AI Based postoperative recovery management

AI and machine learning (ML) are transforming Enhanced Recovery After Surgery (ERAS) protocols by enabling data-driven, individualized patient care. These technologies analyse large volumes of preoperative, intraoperative, and postoperative data to provide real-time risk predictions, allowing clinicians to proactively identify patients at higher risk of complications and adjust interventions accordingly. AI/ML models personalize ERAS elements such as fluid management, pain control, and nutritional support by considering each patient’s demographics, medical history, genetics, and real-time physiological data. Outcome prediction is another key benefit: AI/ML algorithms identify patterns and risk factors in complex datasets, predicting important outcomes such as length of hospital stay, likelihood of readmission, and postoperative complications. These insights support timely, targeted interventions and efficient resource allocation, ensuring that intensive support is focused on those who need it most. Overall, the integration of AI and ML into ERAS protocols promises safer recoveries and reduced hospital stays. However, their success depends on overcoming challenges in data access and privacy and on collaboration between clinicians and data scientists, ensuring that AI serves as a supportive tool and not a replacement for human expertise (19). In addition, there is currently a lack of large, high-quality randomized controlled trials (RCTs) that directly compare patient outcomes in AI-augmented robotic surgery with those in robotic surgery without advanced AI in gynecology. Most available RCTs and meta-analyses in the field compare robotic surgery (which may involve some basic AI functions) with conventional laparoscopy or open surgery.

AI in surgical training

AI will have a transformative impact on the training of junior surgeons in the field of gynecological endoscopy. AI enhanced educational tools and feedback systems offer several advantages over traditional training, enabling more efficient skill acquisition and fostering individualized growth. AI powered video analysis platforms can objectively assess surgical performance, track progress over time, and deliver immediate, targeted feedback. Ma et al. (2023) reported that the use of AI based video feedback led to substantial improvements in needle handling skills, particularly among underperforming trainees. This study highlights the ability of AI tools to identify specific areas of weakness and deliver actionable feedback that accelerates learning curves (20). Similarly, Laca et al. (2023) found that AI guided feedback during robotic suturing tasks was especially valuable for novice trainees, who benefited significantly from personalized recommendations. Their findings underscore the importance of adaptive, data-driven feedback in optimizing skill development and building confidence among junior surgeons (21). Another tool developed to enhance simulation-based training is the Virtual Operative Assistant (VOA). It provides junior surgeons with objective, automated feedback on their performance in simulated endoscopic procedures, accelerating skill acquisition by identifying areas for improvement based on expert proficiency benchmarks (22).

Limitations of AI

Despite its promise, AI’s application in gynecologic robotic surgery faces several key limitations. The accuracy of AI systems depends on large, high-quality, and diverse datasets; biased or incomplete data can undermine generalizability and perpetuate healthcare disparities. Many AI models lack robust external validation, limiting their reliability across different clinical environments. Furthermore, the complexity and “black box” nature of sophisticated algorithms hinder transparency, making it difficult for clinicians to interpret or trust AI-generated recommendations. This lack of explainability can reduce clinician confidence and complicate patient communication. Finally, limited clinician familiarity with AI technologies poses a challenge to effective integration into surgical practice, emphasizing the need for better educational resources and intuitive interfaces (23–25).

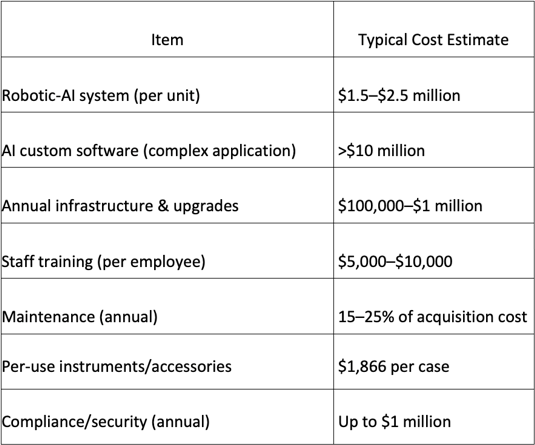

Financial implications

The cost of implementing AI in gynecological robotic surgery is substantial and multifaceted, involving not just the acquisition of robotic systems but also ongoing expenditures on AI software, compliance, training, and maintenance (26–28). However, compared to standard laparoscopic or open surgical techniques, the adoption of robotic AI platforms has yet to consistently demonstrate clear cost-effectiveness in all settings. Traditional approaches generally involve lower capital and operating costs and may deliver comparable outcomes for certain procedures, especially where experienced surgical teams are available.

Social, ethical and legal challenges

Accountability: The introduction of AI shifts traditional accountability models in surgery. Although the final treatment decision often rests with the human surgeon, when outcomes are influenced by AI recommendations, questions arise: Who is responsible if a patient is harmed, the device manufacturer, the software developer, or the clinician? Current frameworks tend to place ultimate responsibility on the physician, yet clinicians may not fully control or comprehend the AI’s internal logic. This shared or distributed accountability has profound implications for medical liability and patient safety. Example: in one instance, an AI driven recommendation to alter the surgical technique based on intraoperative data was followed by the surgeon, but led to an unexpected complication. Because the surgeon lacked access to the precise rationale behind the AI’s recommendation, assigning fault became complex and legally contentious. Addressing these social and legal challenges will be as crucial as the technical advances. Careful regulation and ethical oversight will determine how AI ultimately shapes the future of gynecologic robotic surgery (23).

Explainability and Trust in AI Predictions: Surgeons must be able to interpret and trust AI outputs, especially in high-risk clinical environments. A lack of transparency can erode confidence, inhibit adoption, and even endanger patient safety. Explainable AI methods, such as local interpretability tools or dashboards displaying variable importance, help demystify AI recommendations and empower clinicians to question or override them when necessary. Models equipped with interpretable features enable surgeons to validate suggestions and build informed trust in AI-driven assistance (25). In a recent surgical AI study, the model’s output was accompanied by explanations showing how tumor size, surgical type, and patient comorbidities contributed to predicted complexity. This transparency was valued by surgeons, who could then tailor their intraoperative approach accordingly (29).
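As a rough illustration of what a per-variable explanation can look like, the sketch below uses a simple additive (linear) score, for which each variable's contribution is just its weight times its deviation from a reference case. The feature names echo the variables mentioned above, but the weights, reference values, and base score are invented for illustration and are not taken from the cited study; non-linear models need dedicated attribution methods rather than this shortcut.

```python
# Hypothetical model parameters (assumptions for illustration only).
WEIGHTS = {"tumor_size_cm": 0.5, "radical_procedure": 2.0, "comorbidity_count": 0.25}
REFERENCE = {"tumor_size_cm": 4.0, "radical_procedure": 0.0, "comorbidity_count": 1.0}
BASE_SCORE = 3.0  # predicted complexity for the reference case


def explain(case):
    """Return (score, contributions) for an additive complexity score.

    Each contribution is weight * (value - reference value), so the
    contributions sum exactly to the difference from the base score.
    """
    contributions = {
        name: WEIGHTS[name] * (case[name] - REFERENCE[name]) for name in WEIGHTS
    }
    score = BASE_SCORE + sum(contributions.values())
    return score, contributions


def ranked(contributions):
    """Variables ordered by absolute contribution, largest first."""
    return sorted(contributions, key=lambda name: abs(contributions[name]), reverse=True)


# Hypothetical patient: 8 cm tumor, radical procedure planned, 3 comorbidities.
case = {"tumor_size_cm": 8.0, "radical_procedure": 1.0, "comorbidity_count": 3.0}
score, contributions = explain(case)
```

Presenting the contributions alongside the score lets a surgeon see which inputs drove the prediction and judge whether they are clinically plausible before acting on it.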

Global Regulatory Frameworks: AI use in healthcare, and specifically in surgical robotics, is subject to rapidly evolving global regulatory oversight. AI-powered surgical devices are usually classified as high-risk medical devices due to their direct impact on patient health and safety. The U.S. FDA regulates AI enabled medical devices through draft guidance requiring clinical trials to ensure safety and efficacy before approval. Devices must be designed to reduce risks from software errors, user mistakes, and faulty data. Manufacturers are obliged to provide maintenance and update information to users and report device malfunctions to the FDA within 30 days. Meanwhile, the UK’s MHRA emphasizes using established frameworks to guarantee AI medical devices are safe, effective, and suitable for all intended populations. It also highlights the need for transparent, interpretable AI models that are robust, testable, or thoroughly validated (30).

Limitations of the study

This review synthesizes the current landscape of AI applications in gynecologic robotic surgery, but it is subject to several important limitations. First, most of the available evidence derives from early-stage studies, technical feasibility reports, and retrospective analyses, with relatively few large-scale randomized controlled trials directly assessing clinical outcomes. Consequently, the generalizability of many findings is limited, and the long-term impact of AI systems on patient outcomes and surgical training remains to be fully established. Additionally, the rapidly evolving nature of AI technologies means that some innovations described may become outdated or surpassed by newer algorithms and platforms in the near future. Another constraint is the heterogeneity in reporting standards, endpoints, and evaluation metrics across studies, which complicates direct comparison and meta-analysis. Ethical, legal, and data privacy considerations, though touched upon, are evolving and were not exhaustively assessed in this review. Finally, the literature search was limited to English-language articles published between 2020 and 2025, potentially excluding relevant earlier work or non-English studies. These limitations highlight the need for ongoing, high-quality clinical research and international consensus to optimize the safe, effective, and equitable integration of AI into gynecologic robotic surgery.

Conclusion

AI is increasingly integrated into gynecologic robotic surgery, offering substantial advancements in surgical precision, visualization, and workflow optimization. AI-driven automation of repetitive tasks such as suturing and camera control reduces surgeon fatigue and enhances procedural consistency. Augmented reality combined with AI facilitates improved intraoperative visualization and lesion localization, addressing challenges posed by limited tactile feedback in minimally invasive surgery. Furthermore, AI-enabled tactile intelligence and haptic feedback systems compensate for the loss of direct palpation, improving tissue discrimination and surgical safety. Machine learning-based workflow and gesture recognition provides objective performance metrics that support surgical training and quality improvement. Despite these promising advancements, challenges remain in managing large datasets, ensuring ethical use, and integrating AI seamlessly across diverse clinical environments. Questions about standardization, long-term patient outcomes, and accountability hinder full adoption. Future research should emphasize robust clinical validation through large-scale trials, development of explainable AI models to build clinician trust, and establishment of regulatory frameworks that ensure patient safety without impeding innovation. Multidisciplinary collaboration is essential to overcome existing barriers and harness AI’s full potential in gynecologic robotic surgery. Ultimately, AI integration heralds a new era of safer, more efficient, and patient-centred robotic gynecologic surgery. Advances in AI have already enhanced precision and training, and ongoing research and clear clinical standards will determine the success of these technologies in the operating room.